LLM Benchmarking Across Major AI Platforms

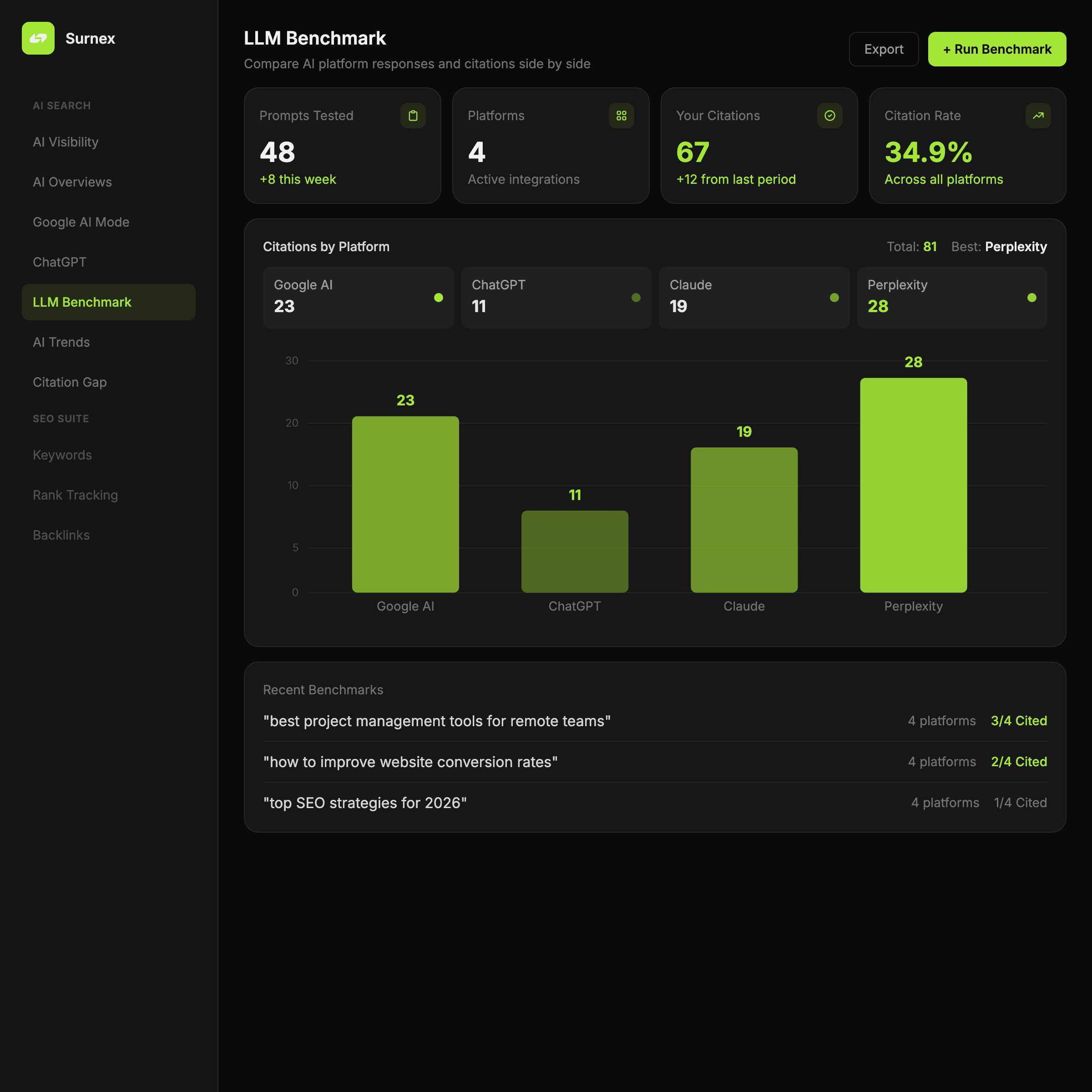

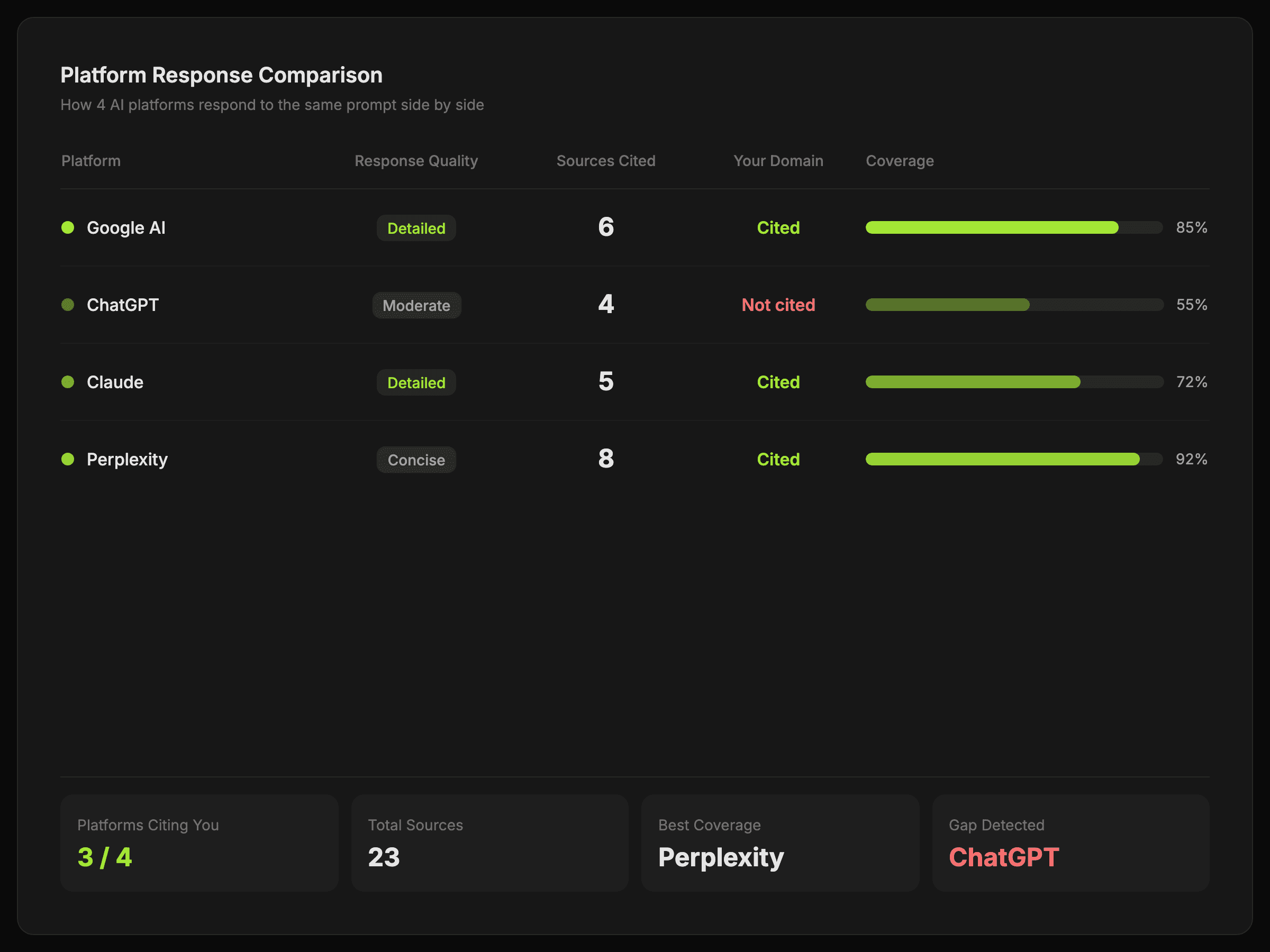

Compare how Google AI, ChatGPT, Claude, and Perplexity answer the same prompt and see where your domain earns citations.

Built for side-by-side model comparison

Compare answer framing, brand mentions, and citation patterns across the major AI platforms in one place.

See how major AI platforms answer the same question

Use LLM Benchmarking to compare responses side by side so your team can spot differences in mentions, citations, and framing.

Compare answers side by side

Review how Google AI, ChatGPT, Claude, and Perplexity respond to the same prompt without jumping between tools.

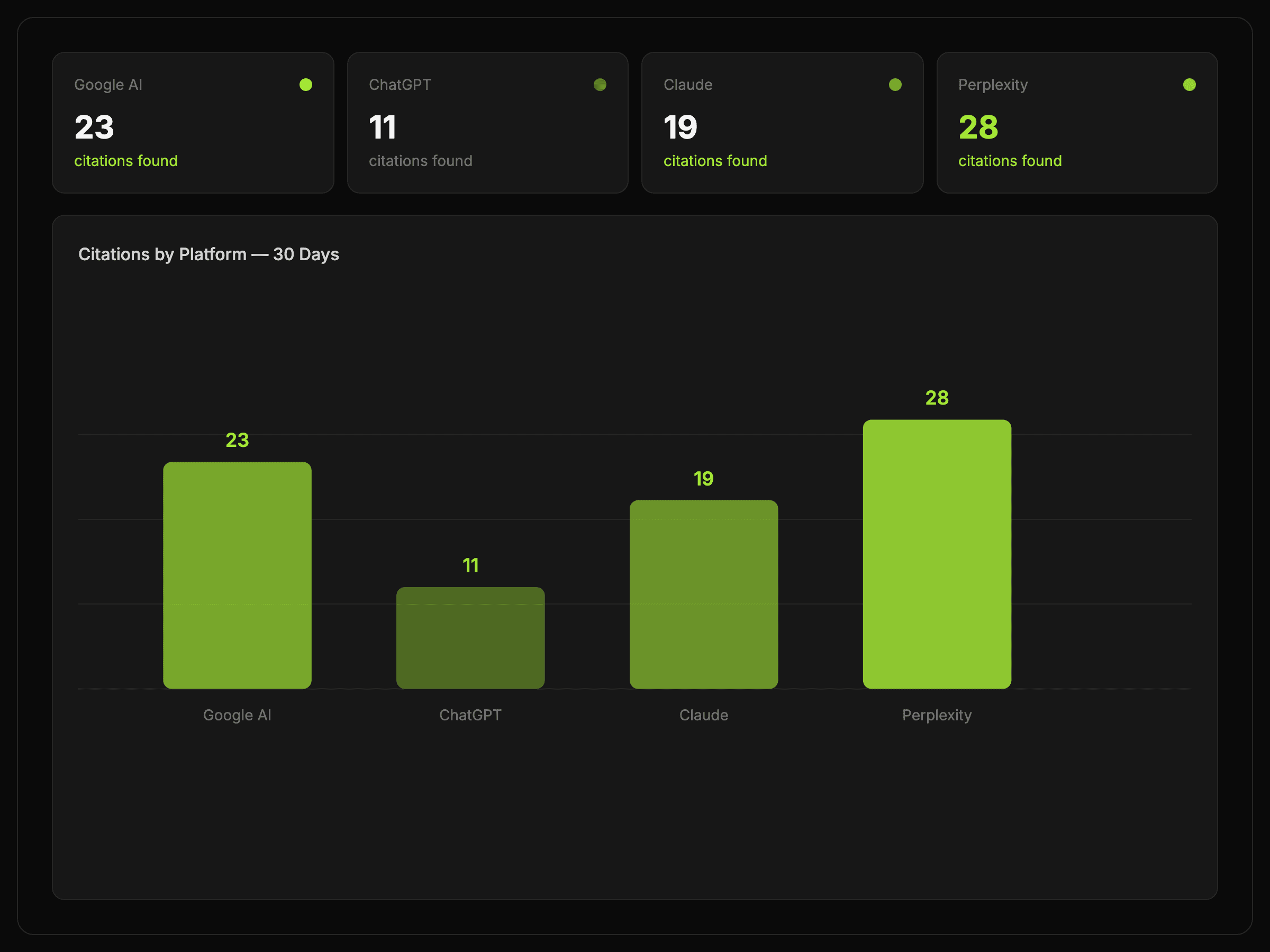

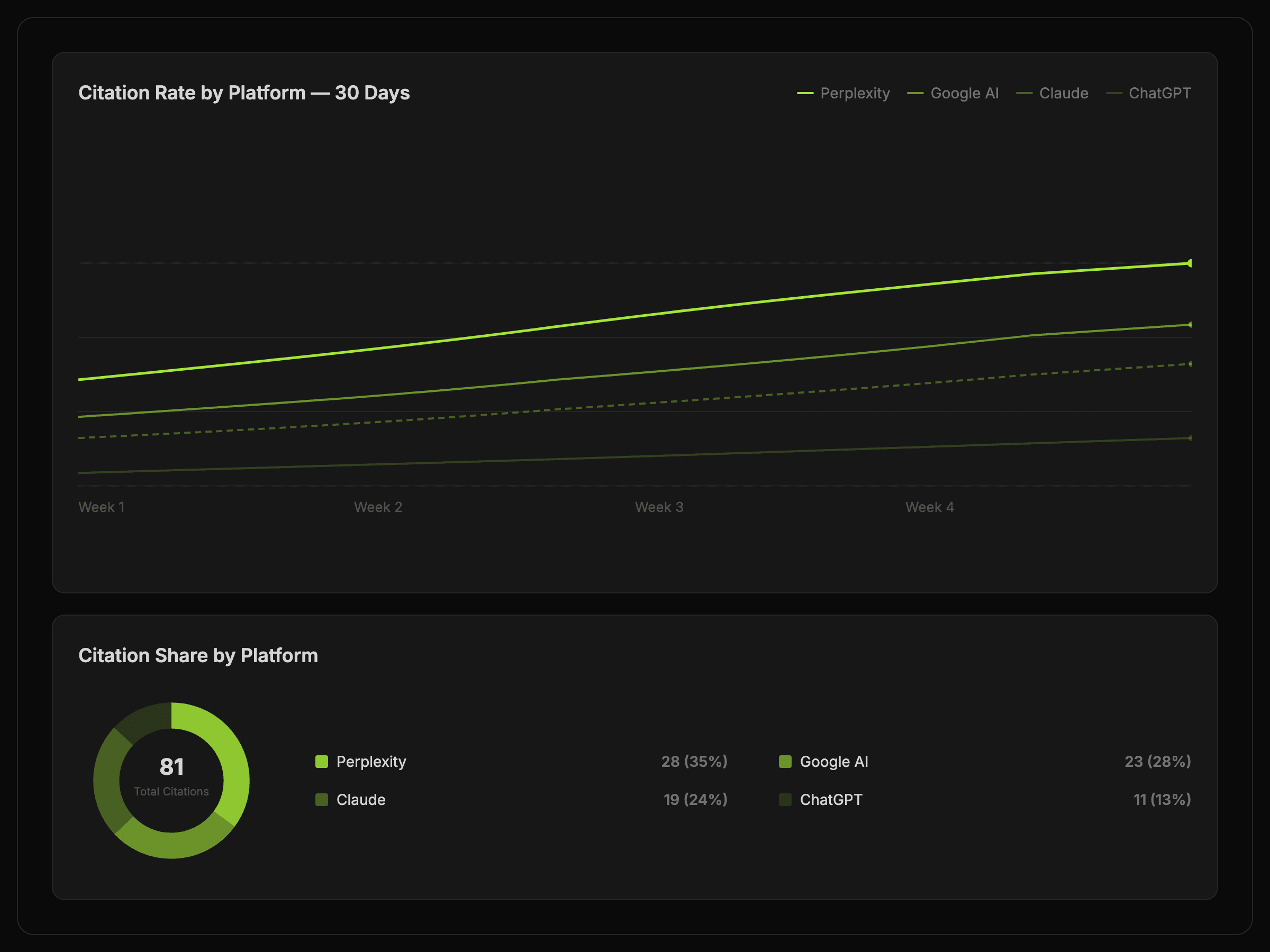

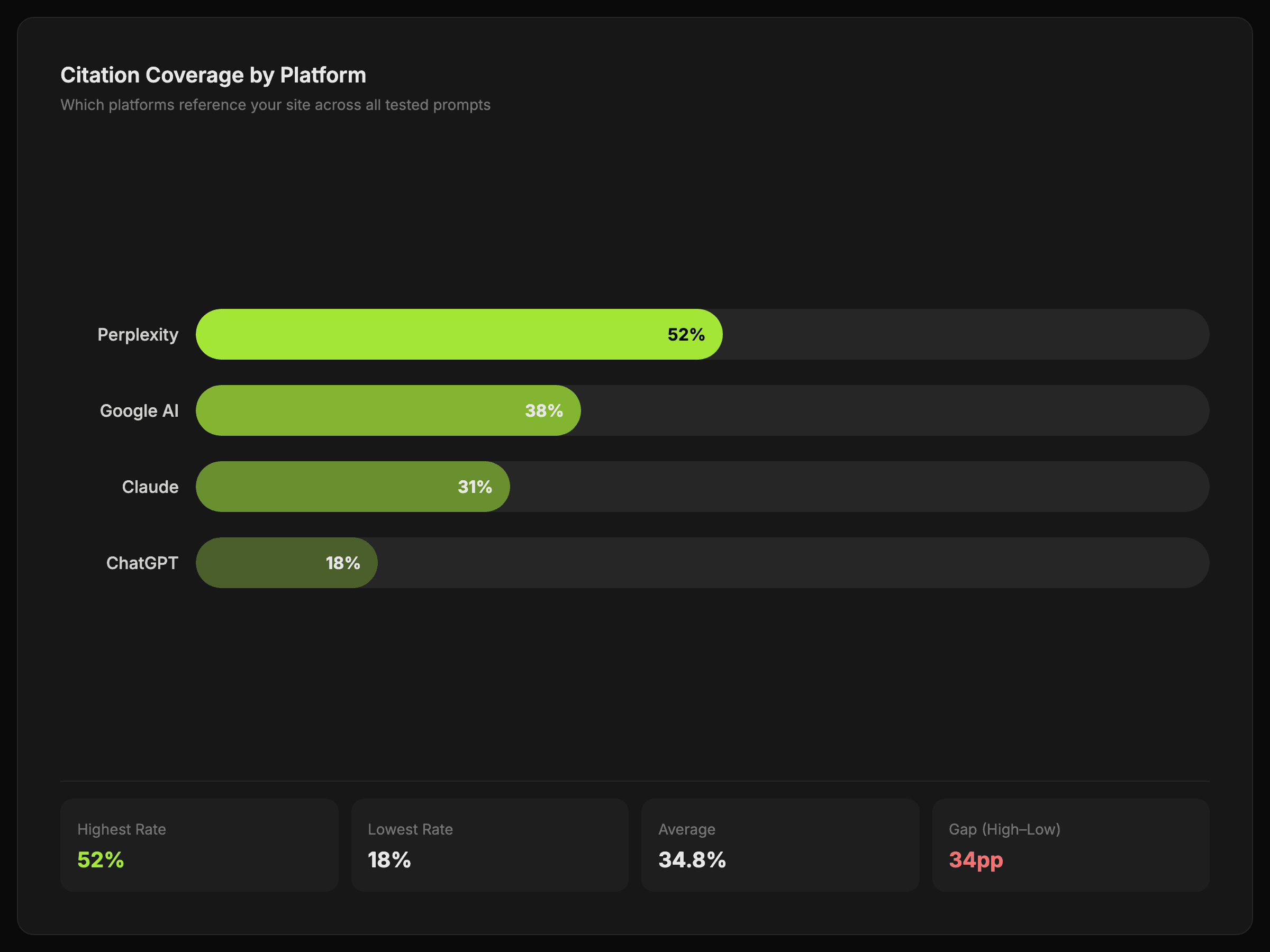

Check whether your domain is cited

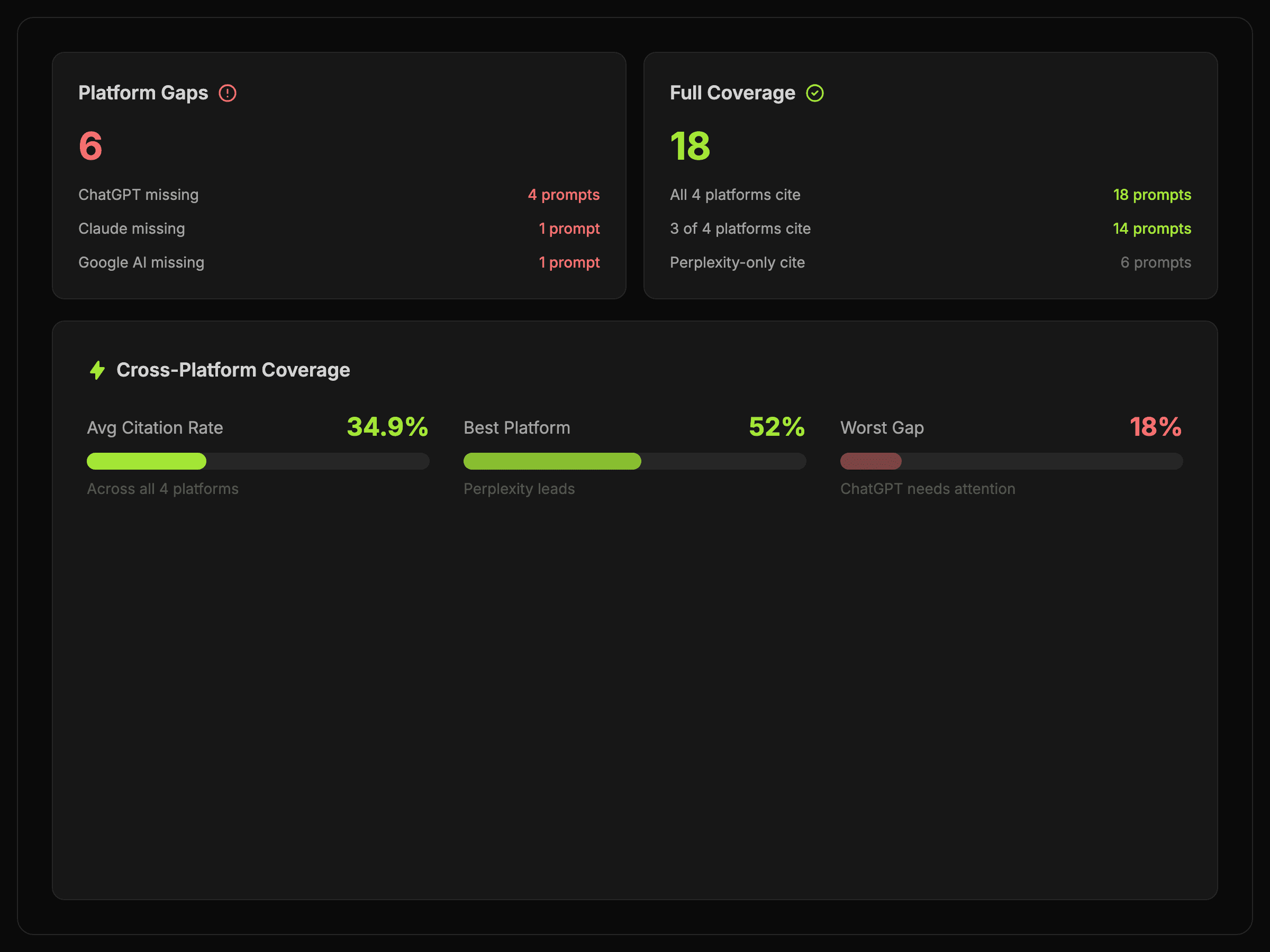

See which platforms reference your site, which favor competitors, and where citation visibility is strongest.

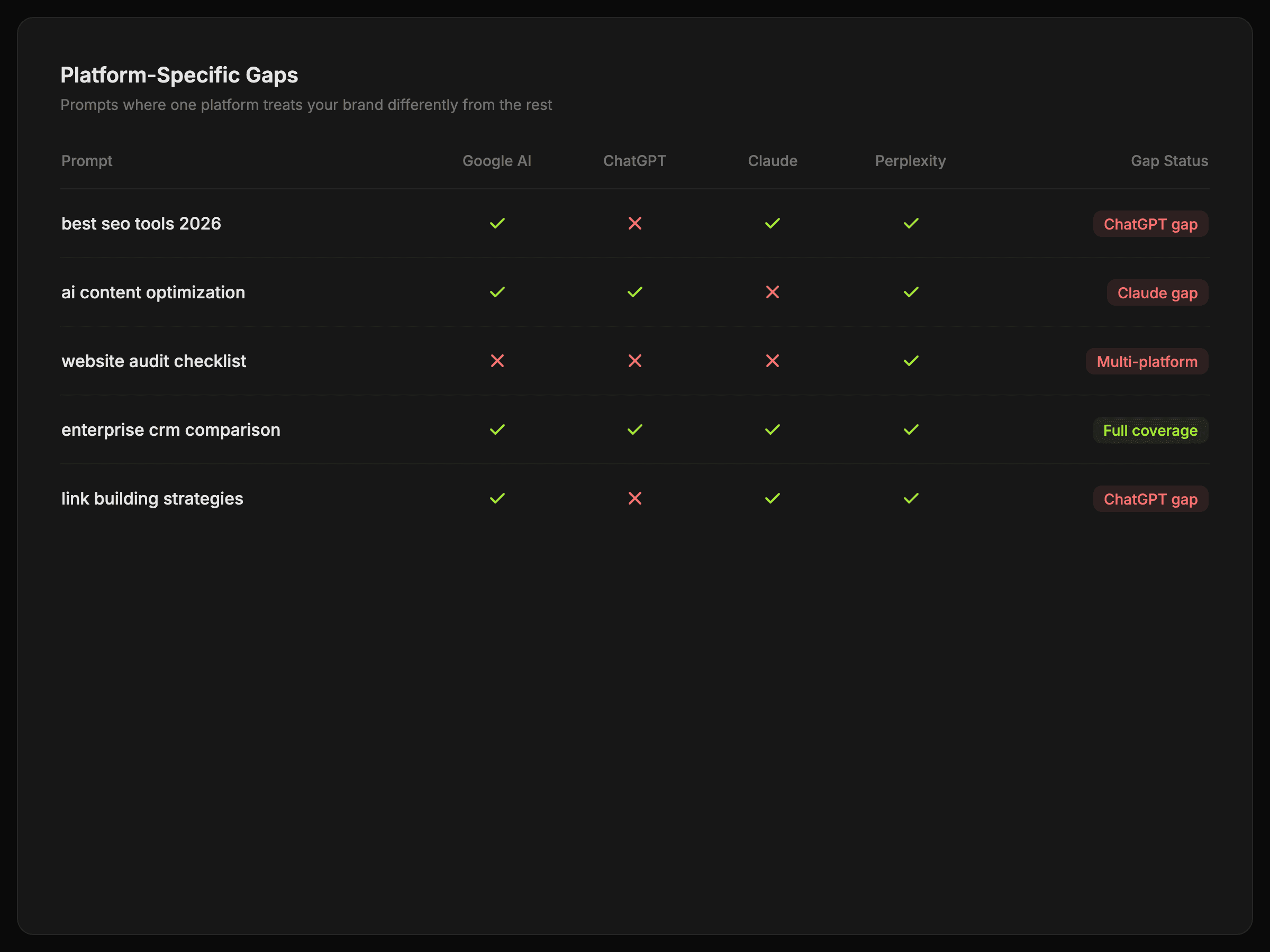

Spot platform-specific gaps

Find the topics and prompts where one platform treats your brand differently from the rest.

A clearer benchmark across AI platforms

Feedback from teams that use Surnex to compare model behavior and citation coverage without resorting to spreadsheets.

“Surnex helps us compare Google AI, ChatGPT, Claude, and Perplexity side by side instead of reviewing them one at a time.”

Sarah Mitchell

Director, Growth Agency

“We can see where our domain is cited, where competitors are stronger, and how the framing changes by platform.”

Jason Reed

Head of Search, Northline Digital

“The benchmark makes platform differences obvious enough that we can decide what to fix next without overcomplicating it.”

Emma Clarke

Managing Director, Brightpath Media

LLM Benchmarking FAQ

Answers to the questions teams ask when they start comparing answer behavior across major AI platforms.

Still have questions? Contact our support team.

Start benchmarking AI answers side by side

Compare response framing, citations, and brand presence across the major AI platforms in one focused workflow.